Recently I captured 180,000 photos in 13 hours, of Dundee’s historical burial grounds. This was achieved with 8 continuously capturing Raspberry Pi cameras that were attached to a home made camera rig. These photos were then put through a photogrammetry software to generate a virtual 3D model of the area. This model will be used in cartography, digital heritage, and virtual reality.

In this blog post I discuss:

- why I performed this experiment and what I hoped to learn;

- the equipment used;

- how the capture method evolved during the experiment and how it could be improved further;

- the concept of the 3D model and its relevance to subsequent Howff digitisation efforts.

Experiment history and aims

At the end of last year and the start of this year I spent some time in Dundee city centre using a handheld DSLR camera to capture 40,000 photos. These photos were put through a photogrammetry (3D-scanning) software to generate a virtual model of the city centre, for example as shown in this flythrough of a point cloud made of 1 billion points:

Also generated were a series of orthographic projections, derived from solid textured meshes, for example this architectural elevation of 38 Seagate:

I am currently engaged in the architectural and geometrical analysis of the buildings in this area, working towards the reproduction of the city centre area as a full scale, high quality CAD (computer assisted design) model – the type of model that could be used in real time applications such as virtual reality (VR). The type of image shown directly above serves as a scale reference in authoring the building models that will populate that final VR environment.

The photo capture for the 40,000 DSLR photos was around 1,000 per hour, the total capture taking around 40 hours. So part of my motivation in performing the Howff Pi capture has been investigating how to increase capture rate and decrease capture duration (i.e. how to take more photos in less time). Straight off, there are two obvious ways to increase capture rate, being (1) use more cameras simultaneously, and (2) increase the capture rate per camera.

The very first day I tried photographing the Howff with a single handheld DSLR (26th July 2019) I captured 11,500 photos in 3 hours. These photos turned out to be non-viable due to poor choice of capture location (too great a distance between respective camera positions), so that was a 3 hour learning experience. But it showed that it was physically possible to sustain a DSLR capture rate of at least 3,500/hour (which would maybe realistically come down to 2,500/hour when accounting for time taken to change camera position).

Additionally, I was interested to see how viable Raspberry Pi camera photos would be for use in photogrammetry. It was clear that DSLR photos worked fine, and I had previously produced a model of Edinburgh’s New Town area derived from 15,000 photographs captured with a Samsung S7 smartphone (see https://sketchfab.com/DanielMuirhead/collections/edinburgh-city-centre-2018-q4):

I am very keen on using equipment that is financially accessible to as many citizens as possible, and a unit comprised of Raspberry Pi and camera is about as low cost as they come. The other comparable low budget option would be a cheap action camcorder, recording a 1080p video (that could be decomposed into a series of stills to serve as ‘photo’ inputs). A further consideration that guided me towards Raspberry Pi is that I am a STEM Ambassador. So anything involving Raspberry Pi hardware and coding that culminates in an experiment that may be replicated by a community or school group, gets the thumbs up. Also, prior to this experiment I had zero experience or training in either Raspberry Pi or coding, so this was a great learning opportunity!

The last issue I was seeking to explore through this experiment, and one that I am still actively researching and developing, is how to quantify an optimal capture trajectory for a given camera rig when encountering a given subject geometry. By way of explanation: photographing a spherical subject (for photogrammetry) will involve a different capture approach than photographing something shaped like a plane (e.g. a long and tall brick wall), and in turn, capturing a subject with multifaceted and complex geometry will demand a more complex camera trajectory.

If capturing photos for photogrammetry is a game, and we consider each photograph captured as a move in that game (whose capture involves expenditure of time and labour), and the winner is the player who makes the least moves, then how do we get better at playing that game (how do we spend as least time and energy in capturing all the required photos)?

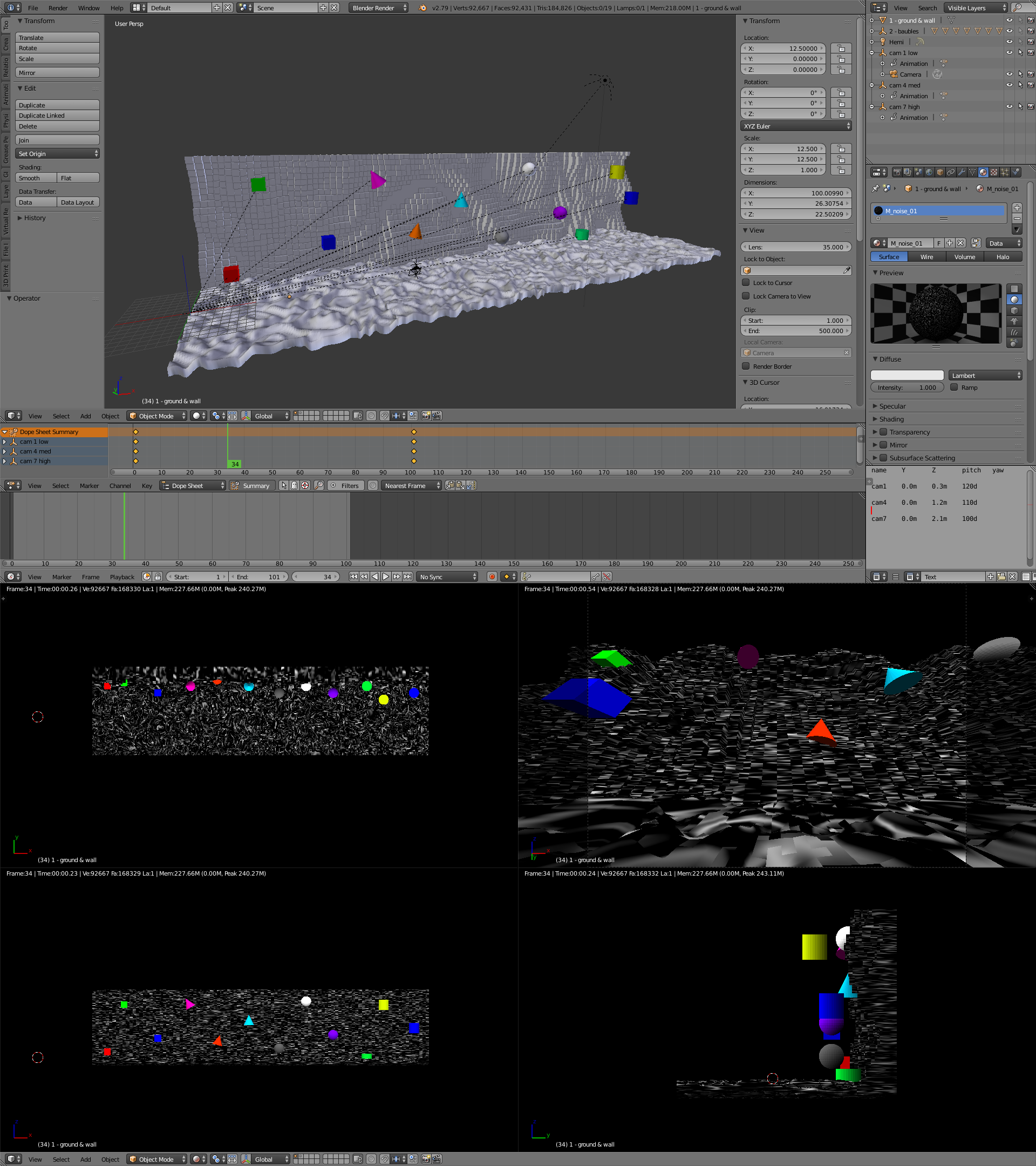

I have been using Blender to run simulations of multi-camera rigs encountering subjects with simple geometry, tweaking the rig trajectory for each simulation, then quantifying the fidelity of the reconstruction as a function of that trajectory. Running this Raspberry Pi multi-cam capture experiment marks my transition from running a simulation to field-testing in the real world. This single experiment is not going to solve that problem of how to most efficiently perform photo capture for photogrammetry, but it is a step in that direction. Here is a screenshot of one of my simulations set up in Blender v2.79:

So to summarise, through this experiment I aimed to:

- explore the use of a multi-camera rig for faster photo acquisition (compared to single camera);

- gauge the viability of Raspberry Pi cameras for use in photogrammetry;

- start real world field-tests of optimal capture trajectory.

Equipment

Capture device

Here is a photo of the camera rig in its most recent iteration, taken after the last capture session:

Cameras:

- 8x Raspberry Pi Zero W

- 4x Raspberry Pi Camera Module V2 (max. resolution 3280*2464px/8MP, later reduced to 2592*1944px/5MP to match Wide Angle cameras)

- 4x Raspberry Pi Zero Camera Module, Wide Angle (by Zerocam) (resolution 2592*1944px/5MP)

- 8x 32GB MicroSD cards (later upgraded to 8x 64GB, prep. of larger sized microSD informed by this webpage)

- 3x portable USB battery charger packs (i.e. the type used to charge a mobile phone when out and about), each with 3 USB outputs

- 8x 1 metre USB to micro-USB cables (to connect Pi to battery) (later upgraded to 2 metre cables).

Each Pi was programmed with a timelapse photo capture that activated as soon as the Pi was powered on (i.e. when out in the field, as soon as it was plugged into the battery pack). My programming of the cameras was only made possible thanks to the vast amount of tutorials and guides other people have helpfully made available for free online. Here is a screenshot of my internal documentation regarding programming the Pi cameras:

After each capture session, the contents of each Pi card were transferred across to a Windows PC via a microSD card reader through the free software ‘ext2explore’ (which took approx. 40 mins per 32gb card, 120 mins per 64gb card). After that data had been copied over, and prior to the next capture session, each card was booted into a camera-less Pi (i.e. so the automatic timelapse capture could not run) for those image files to be manually deleted in order to free up storage space (which took approx. 5 mins per card). (I have no doubt that there will be faster and more efficient ways of achieving data transfer and wipe, e.g. via SSH, but it was beyond my skill and knowledge at the time.)

I needed a non-destructive method for attaching each Raspberry Pi to the rig, so I mounted each Pi onto a plastic board (a.k.a. a chopping board cut into smaller pieces). Then I stuck some sticky-backed hook+loop (i.e. velcro) onto the back of that plastic board, for attaching to the corresponding location on the rig head … however, the sticky-backed element kept on unsticking from the plastic backing, so I attached some card loops to the plastic backing, then stuck the hook+loop to that card. The whole thing is held together by friction, both in the sense of the hook+loop and the card loops – but after that initial revision it thankfully has maintained cohesion!

The standard v2 cameras were secured to the Pi Zero boards via zip ties (which is undoubtedly a terrible idea – please do not replicate this step as it will probably damage the Pi or camera parts!). The smaller headed wide-angle cameras were each sheathed in a piece of card that was folded over and stapled, again that card intervention was to facilitate the sticky-backed hook+loop attachment.

Rig:

- polystyrene ball (the ‘head’), 15cm diameter, purchased from a craft store

- thin lengths of wood (the ‘handle’), purchased from a hardware store

- a knitting needle (courtesy of my Mum), skewering a plastic food lid, then skewering down through the centre of the ball and protruding below, for attaching the ‘head’ to the ‘handle’

- a selection of binds, including:

- zip ties, for holding the 2-piece handle together and attaching the knitting needle to the handle;

- rubber bands, for routing the power cables down the handle;

- double sided hook+loop (i.e. hooks on front, loops on back of same strip) for cable routing and for securing a cowl (a.k.a. a plastic bag) over the rig head while in transit

- lastly, a dynamic enclosure for the power units (a.k.a. a toiletries bag in which the batteries were stuffed, suspended from around my neck by a length of string).

The choice of a sphere for the head was intentional, so that it would be possible in theory to position a camera at any desired orientation. The flat-backed camera (a straight line) would intersect with the sphere (a circle) at its tangent, and the sphere is a circle on every axis so that intersection could in principle occur at any location on its surface. With the hook+loop attachment, this offers a (low-tech) ‘infinite variety’ of camera orientations.

The Raspberry Pi camera unit comprises a camera, attached via cable to a computer, attached via cable to a power source. I originally investigated the use of camera extension cables, namely 2 metre cables between each camera and its corresponding computer. All this so it would be possible to have a multi-cam head with small dimensions (e.g. 8 sensors on a 5cm sphere, preparing the ground for 24+ sensors attached to a 30cm sphere, mountable atop an autonomous terrestrial vehicle and/or underside an autonomous aerial vehicle to facilitate rapid surveying). This was fine for one camera, unfortunately for cable routing purposes when 8 of those 2m cables were clumped together this resulted in significant signal interference. It would be possible to counter that interference through superior cable management (i.e. not just bundling them all together and running them down the handle in a single clump). Due to time constraints I opted to directly attach the computer+cameras to the rig head, and instead employ lengthy power cables so at least the weighty power units could be decentralised from the rig head+handle.

Capture Method

Attempt 1:

- power on all cameras (thereby starting continuous capture)

- keep cameras covered by cowl until arrive at first capture position, then remove cowl

- stand in first position, rig raised above head, internally count to 10 seconds, then advance to next capture position, count to 10 seconds, move to next position, etc

- advance across the area in a trajectory of rows+columns:

- if position01 is 0x0y, position02 will be 1x0y, position03 will be 2x0y etc.,

- then (eventually) take a sidestep and start moving backwards, so position11 will be 10x1y, position12 will be 9x1y, position13 will be 8x1y.

Attempt 2 (same as attempt 1 except):

- instead of counting to 10 internally, listen to looping audio track that emits a beep every 6 seconds (1 second to change position, 5 seconds to stand still)

- for every change in position, rotate the camera rig by +45d on the vertical axis, then by -45d for the subsequent position, alternating the rotation back and forth every second position (so that position01 has regular cameras oriented to N/E/S/W and wide angle cameras oriented to NE/SE/SW/NW, then position02 has regulars oriented to NE/SE/SW/NW and wides oriented to N/E/S/W, and so on).

The audio track mentioned above (comprised of a double-beep followed by 5 seconds of silence) was authored in Audacity (Generate>Tone, Sine/440/0.8/0.4s, then manually copy-pasted to form the double-beep), then exported to my Android smartphone as an MP3, where I used Vanilla Music Player to listen to that ‘6 second’ track in a continuous loop for the duration of each subsequent capture (so I continuously listened to that beep track for a cumulative ~10 hours). Here is a screenshot of the audio track being authored in Audacity:

Later attempts used pretty much the same method with a few amendments:

- supplanted 1m power cables with 2m, to give greater range to the camera rig and therefore greater flexibility in its articulation (otherwise the rig movement was constrained by being tethered, via the 1m cables, to the heavy power packs which I was carrying on my person)

- supplanted the 32GB microSD cards with 64GB, thereby making it possible to stay in the field for up to 7 hours, in the process capturing over 100,000 photos, up from the 3.5 hours/50,000 5MP photo capacity of the 32GB cards.

The Howff can be intuitively divided into 10 ‘blocks’ with reference to its network of paths (at least, that is what I have been doing!). Photo capture proceeded with reference to those blocks, as in, for a given capture session I tried to capture one or two discrete blocks. Efforts were made during each capture session to try to divide up the data into manageable chunks, specifically to divide up each block into a set of sub-blocks.

The problem is that my desktop computer (with spec. equivalent to a mid-range gaming PC but with added RAM) can manage maybe 10,000 photos per photogrammetry file. (This constraint is determined by the system resources, specifically the RAM, used during the SfM stage). Even that is pushing it because it is more efficient to process those 10,000 as a set of smaller files, for example 5x 2,000 … at which point the problem becomes how to efficiently merge those 5*2,000s back into a unitary 1*10,000 (which, to be clear, is entirely possible, just more manual-labour intensive compared to the 1x 10,000 option). The way I was surveying the Howff meant that I was generating more than 15,000 photos per block.

With the benefit of hindsight, what I should have done in advance was develop an efficient method for splitting up the data during capture, for example by dividing up the area into a series of overlapping 3,000 photo patches. But I never managed to efficiently achieve that, and ended up resorting to capturing each block as a >15,000 photo set, which I then let the software chunter through. To be clear, the software capable of working with huge data sets (e.g. tens of thousands of photos), provided the processing computer is up to the task. However I was working to a deadline, and due to a combination of the SfM processing duration and my sub-optimal data collection/organisation, I ran out of time for processing all the >150,000 photos that were captured over the course of the week.

Improvements (if I was doing it all again, here are some obvious opportunities for improvement):

- photo capture activated by button press, not timelapse script;

- camera rig attached to gimbal;

- tight adherence to a rigorous fore-planned capture trajectory;

- integration and synchronisation with a Raspberry Pi GNSS unit (e.g. Aldebaran by Dr Franz Fasching) or integration of GPS at least;

- there are no doubt many other opportunities for improvement!

The 3D model

I have two goals for the final 3D model:

- high resolution 2D ground plan, to serve as a base layer in a 2D visualisation of the location of the ~1,500 funerary monuments that are present in the Howff;

- medium resolution 3D model, to serve as a base layer (skeleton, canvas) for attaching individual high resolution DSLR+ scans of each monument.

The Howff is an old burial ground in Dundee city centre that was sepulchrally active from medieval times until fairly recently, and is populated by around 1,500 separate gravestones and tombs. A graveyard is a microcosm of the built human environment, incorporating a mixture of organic and human-made structures and materials. As a technical challenge, the prospect of the digitisation of the Howff is a tantalising opportunity to experiment, research and develop a set of production methods that could be scaled up and applied to other human-nature composites, for example whole towns or cities.

By ‘digitisation’ is meant the reproduction of the physical world as a 3D model, but also – more significantly – the analysis and indexing of all the data embedded into or associated with that physical artefact. For example: any given gravestone is built to a specific design, incorporating named architectural features; the gravestones at the Howff often incorporate symbols that signify which of the ‘nine trades’ the grave occupant was associated with; the gravestone is inscribed with biographical information; and so on. In this sense, the 3D scanning of a real world subject such as Dundee Howff is only one part of a larger complex process, but hopefully a useful part that can facilitate the wider effort!

To that end, I will be gifting the assets I have generated through this experiment to the Howff community group, namely the Dundee Howff Conservation Group SCIO (on Facebook, on Sketchfab).

Excellent stuff. Have you considered global shutter cameras ? They may remove the need to be stationary.

Can I please ask you what software you use to create the model from the images ?

I have heritage/archaeology and utility (trench) projects where this could be really useful. I have been looking at using StereoPi with an imu to provide orientation of the images.

The Nvidia Jetson Nano can have multiple cameras attached.

Thank you for the hardware suggestions and mentions – global shutter cameras, StereoPi and imu, Nvidia Jetson Nano – that is informative to hear and worth further study!

I have not heard of global shutter cameras but if they removed the need to be stationary then that would really help increase the capture rate.

At the time of performing this project I was using 3DF Zephyr. Presently I am looking at alternatives and benchmarking a number of different softwares, including COLMAP, Agisoft Metashape, RealityCapture. (Here is my spreadsheet, in the process of being filled out – https://docs.google.com/spreadsheets/d/1SXJ-Ng8-xvKwk4cYw6SCTetxqIaDBCo6EV8ULBOcCVM/edit?usp=sharing ). I am still evaluating the most efficient way to process all the data, plus e.g. considering if there would be a more clever way of capturing the data that would reduce (photogrammetry) production time in later stages.

A concise guide to photogrammetry is soon to be published on this website, plus a series of short videos (<60s) showing how to get started with the respective photogrammetry softwares. All that should be going live within a fortnight, so if that could be helpful then stay tuned! Also worth mentioning that a great source for information on photogrammetry software is the blog of Peter Falkingham, https://peterfalkingham.com/blog/ , e.g. https://peterfalkingham.com/2020/07/10/free-and-commercial-photogrammetry-software-review-2020/

Regarding image orientation, that is useful to hear about the StereoPi with an imu. I have limited experience of Pi hardware and have approached this project as someone well versed in photogrammetry but purely winging it when it came to choice and design of Pi hardware(!). Also, my previous experience in photogrammetry is exclusively with 'dumb' photos, 'dumb' in the sense of no location or orientation data, plus these photos were captured serial (not parallel/stereo). If there was a way of embedding orientation data in a way that was useful to the photogrammetry (or other reconstruction) software then that would surely massively help the reconstruction. I have considered looking into the Pi Sense HAT for recording rig orientation – then, if the rig frame was rigid and had multiple cameras with quantified positions+orientations, it would surely be possible to extrapolate that position+orientation data for each camera, which in theory would massively speed up the SfM stage in photogrammetry.

I had not heard of the Jetson so that was informative to hear, thanks for sharing.

In order to process all the data, did you think about Meshroom? https://alicevision.org/#meshroom

It’s a Free and Open Source Software, and there is a very interesting option called “live reconstruction”. If you can synchronize your camera storage with a computer where Meshroom is running, each time a new picture is coming to the folder, it will be aligned.

I never used this tool, I only saw it and was enthusiastic about it 🙂

I am very interested in the way to create this kind of rig. I am also dealing with archaeological stuff, and it could be usefull to record in a more easier way dolmens for instance if we can add some spotlight to the rig.

Thank you for your comment, Meshroom is a great suggestion!

I have some previous experience with that software, e.g. this collection from a couple of years ago on my Sketchfab: https://skfb.ly/6Wn8C . More recently I have casually tried out Meshroom on a few occasions, but the processing times for both the SfM and MVS stages seems to be exceptionally long when compared to commercially available software – however, I have yet to take a deep dive into Meshroom to find the fastest and/or most efficient settings, so I cannot speak of its comparative performance with absolute certainty. That is exciting to hear about the “live reconstruction” option, it sounds like a useful tool.

That is interesting to hear about your archaeological subjects – I am sure you would be able to design a rig with an integrated light source, whether that light was continuously emitting, or only emitting prior to or simultaneous with photo capture, and if the emitting illumination was of a specific type for any reason (UV/infrared/’regular’). No doubt the Pi and Photogrammetry community would have great interest in such a rig, if you proceeded with that I and others would look forward to seeing and hearing how it goes!

I’m interested to know how the data set was scaled/coordinated.

For scaling, the 3D model was cross-referenced with copyright-expired Ordnance Survey map data (e.g. https://maps.nls.uk/geo/explore/print/#zoom=18&lat=56.46167&lon=-2.97240&layers=170&b=3). This, in conjunction with eyeballing (i.e. guessing) the levelling of the model with reference to parts within that should be inherently level (e.g. the perimeter wall). So in other words, the scaling/coordinating was not terribly accurate, or at least, in its present form the workflow has room for significant inadvertent errors.

A simple way of rectifying this would be to record position data (latitude longitude altitude) for at least 3 evenly spread points in the area under reconstruction, e.g. for this Dundee Howff area, north gate, south-west gate, south-east corner. Then (assuming the validity and integrity of the 3D model in itself) coordinate the 3D model with reference to those points.

Alternatively, recording position data for each photo captured via e.g. the integration of a GPS or GNSS module into the rig, is the type of data that could be directly used by the photogrammetry software to produce a model that was georeferenced from the start.